Mathematics, 02.12.2020 20:50, toricarter00

Stacy spent £12 on ingredients for bread.

She made 15 loaves and sold them for £1.20 each.

Calculate her percentage profit.
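Here is a minimal sketch of the arithmetic. It assumes percentage profit is measured against the cost, which is the usual convention; measuring against revenue would give a profit margin instead. The helper `percentage_profit` is hypothetical. `Fraction` keeps the money values exact. Revenue is 15 × £1.20 = £18, profit is £18 − £12 = £6, and 6 ÷ 12 × 100 = 50%.

```python
from fractions import Fraction

def percentage_profit(cost: Fraction, revenue: Fraction) -> Fraction:
    """Profit expressed as a percentage of the original cost (assumed convention)."""
    profit = revenue - cost
    return profit / cost * 100

cost = Fraction(12)                # £12 spent on ingredients
revenue = 15 * Fraction("1.20")    # 15 loaves sold at £1.20 each
print(f"Revenue: £{float(revenue):.2f}")                                  # Revenue: £18.00
print(f"Profit: £{float(revenue - cost):.2f}")                            # Profit: £6.00
print(f"Percentage profit: {float(percentage_profit(cost, revenue)):.1f}%")  # Percentage profit: 50.0%
```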

Other questions on the subject: Mathematics

Mathematics, 21.06.2019 16:30, AutumnJoy12

Yoku is putting on sunscreen. He uses 2 ml to cover 50 cm² of his skin. He wants to know how many milliliters of sunscreen (c) he needs to cover 325 cm² of his skin. How many milliliters of sunscreen does Yoku need to cover 325 cm² of his skin?
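This sketch assumes the amount of sunscreen scales in direct proportion to skin area. Under that assumption, c/325 = 2/50, so c = 2 × 325 ÷ 50 = 13 ml. The function name and its default parameters below are illustrative and do not come from the question.

```python
from fractions import Fraction

def sunscreen_needed(area_cm2, ref_ml=2, ref_area_cm2=50):
    """Direct proportion: c = ref_ml * area / ref_area (hypothetical helper)."""
    return Fraction(ref_ml) * area_cm2 / ref_area_cm2

c = sunscreen_needed(325)
print(f"c = {float(c)} ml")  # c = 13.0 ml
```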

Mathematics, 21.06.2019 18:30, Luciano3202

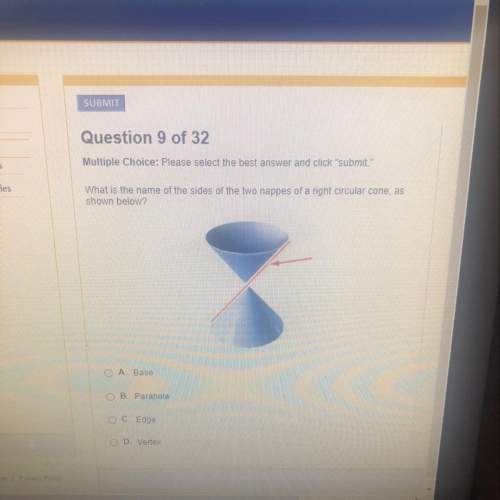

Identify the polynomial a²b − cd³. Options: (a) monomial, (b) binomial, (c) trinomial, (d) four-term polynomial, (e) five-term polynomial.
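The garbled `a2b - cd3` is read here as a²b − cd³, which is an assumption about the lost superscripts. On that reading, the expression splits into two terms separated by subtraction. That makes it a binomial, option (b).

```latex
a^2b - cd^3 = \underbrace{a^2b}_{\text{term 1}} + \underbrace{(-cd^3)}_{\text{term 2}}
\quad\Longrightarrow\quad \text{2 terms: binomial}
```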

Mathematics, 21.06.2019 20:00, Irenesmarie8493

The graph and table show the relationship between y, the number of words Jean has typed for her essay, and x, the number of minutes she has been typing on the computer. According to the line of best fit, about how many words will Jean have typed when she completes 60 minutes of typing? Options: 2,500; 2,750; 3,000; 3,250.
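The graph and table are not included on this page, so the answer cannot be computed here. As a hedged sketch, assume the line of best fit is the ordinary least-squares line. The code below shows how that fit is found and then evaluated at x = 60. `fit_line` is a hypothetical helper, and the sample points are toy values chosen to lie on y = 2x + 1; they are not Jean's data.

```python
def fit_line(points):
    """Ordinary least-squares fit of y = m*x + b; returns (m, b)."""
    n = len(points)
    sum_x = sum(x for x, _ in points)
    sum_y = sum(y for _, y in points)
    sum_xy = sum(x * y for x, y in points)
    sum_xx = sum(x * x for x, _ in points)
    m = (n * sum_xy - sum_x * sum_y) / (n * sum_xx - sum_x ** 2)
    b = (sum_y - m * sum_x) / n
    return m, b

# Toy (x, y) points for illustration only; NOT the values from Jean's graph or table.
sample = [(1, 3), (2, 5), (3, 7), (4, 9)]
m, b = fit_line(sample)
print(f"y = {m}x + {b}")                    # y = 2.0x + 1.0
print(f"Prediction at x = 60: {m * 60 + b}")  # Prediction at x = 60: 121.0
```

With the real readings from the graph or table substituted for `sample`, the same prediction step gives the estimate to compare against the four options.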
