Physics, 17.07.2019 17:20, kaitlyn0123

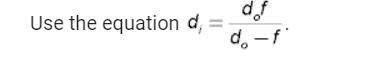

Gradient descent: Consider the code example discussed in class. The prediction function is 1 +ws and the loss function is y(w, i) t, where t represents the vector of observed targets and x represents the vector of observed features.

(a) Derive the gradient and Hessian of L(w, x) with respect to w.

(b) Implement them and re-run the example, playing around with the step size and starting values. Do you see how much work it took to get Newton's method to converge to something sensible?

(c) Modify the code to give you stochastic gradient descent. Try this with different mini-batch sizes and starting values to get a feel for how it works, particularly the stability of the algorithm with respect to these hyper-parameters.
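For part (a), the prediction and loss functions above did not survive intact, so here is a worked derivation under an assumption: take a hypothetical linear prediction $y(w, x_n) = w_0 + w_1 x_n$ and a squared-error loss. The general squared-error form is written first and then specialized. With a nonlinear prediction function the second Hessian term, $r_n \nabla_w^2 y$, does not vanish, which is one reason Newton's method can need more care.

```latex
\begin{align*}
L(w) &= \sum_{n=1}^{N} r_n^2, \qquad r_n = y(w, x_n) - t_n, \\
\nabla_w L &= 2 \sum_{n=1}^{N} r_n \, \nabla_w y(w, x_n)
            = 2 \sum_{n=1}^{N} r_n \begin{pmatrix} 1 \\ x_n \end{pmatrix}, \\
H &= 2 \sum_{n=1}^{N} \Big[ \nabla_w y(w, x_n) \, \nabla_w y(w, x_n)^{\top}
      + r_n \, \nabla_w^2 y(w, x_n) \Big]
   = 2 \sum_{n=1}^{N} \begin{pmatrix} 1 & x_n \\ x_n & x_n^2 \end{pmatrix}, \\
w^{(k+1)} &= w^{(k)} - H^{-1} \nabla_w L .
\end{align*}
```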

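For parts (b) and (c), a minimal pure-Python sketch is given below. It is not the code from class: it assumes a hypothetical linear model y = w0 + w1*x with squared-error loss L(w) = sum_n (y_n - t_n)^2, and the function names (`predict`, `gradient`, `hessian`, `gradient_descent`, `newton`, `sgd`) and the noise-free data on the line t = 1 + 2x are made up for illustration. Because this loss is quadratic, one Newton step lands on the minimizer, so this toy version will not show the convergence trouble described in (b).

```python
import random

# Hypothetical stand-in for the class example (an assumption, not the original):
# linear prediction y = w0 + w1 * x and squared-error loss
# L(w) = sum_n (y_n - t_n)^2. Replace with the real model from class.


def predict(w, x):
    return w[0] + w[1] * x


def loss(w, xs, ts):
    return sum((predict(w, x) - t) ** 2 for x, t in zip(xs, ts))


def gradient(w, xs, ts):
    """Gradient of L with respect to (w0, w1), summed over the given points."""
    g0 = g1 = 0.0
    for x, t in zip(xs, ts):
        r = predict(w, x) - t  # residual r_n
        g0 += 2 * r
        g1 += 2 * r * x
    return [g0, g1]


def hessian(xs):
    """Hessian of L; constant in w because the assumed model is linear."""
    n = len(xs)
    sx = sum(xs)
    sxx = sum(x * x for x in xs)
    return [[2.0 * n, 2.0 * sx], [2.0 * sx, 2.0 * sxx]]


def gradient_descent(w, xs, ts, step=1e-3, iters=5000):
    """Full-batch gradient descent with a fixed step size."""
    for _ in range(iters):
        g = gradient(w, xs, ts)
        w = [w[0] - step * g[0], w[1] - step * g[1]]
    return w


def newton(w, xs, ts, iters=10):
    """Newton's method: w <- w - H^{-1} grad, using the explicit 2x2 inverse."""
    (a, b), (c, d) = hessian(xs)
    det = a * d - b * c
    for _ in range(iters):
        g = gradient(w, xs, ts)
        delta0 = (d * g[0] - b * g[1]) / det
        delta1 = (-c * g[0] + a * g[1]) / det
        w = [w[0] - delta0, w[1] - delta1]
    return w


def sgd(w, xs, ts, step=0.05, batch_size=4, epochs=500, seed=0):
    """Mini-batch SGD: reshuffle each epoch, step on the batch-averaged gradient."""
    rng = random.Random(seed)
    idx = list(range(len(xs)))
    for _ in range(epochs):
        rng.shuffle(idx)
        for start in range(0, len(idx), batch_size):
            batch = idx[start:start + batch_size]
            g = gradient(w, [xs[i] for i in batch], [ts[i] for i in batch])
            m = len(batch)
            w = [w[0] - step * g[0] / m, w[1] - step * g[1] / m]
    return w


# Made-up, noise-free data on the line t = 1 + 2x, for illustration only.
xs = [i / 10 for i in range(20)]
ts = [1 + 2 * x for x in xs]
w_gd = gradient_descent([0.0, 0.0], xs, ts)
w_newton = newton([0.0, 0.0], xs, ts)
w_sgd = sgd([0.0, 0.0], xs, ts)
print("GD:", w_gd, "Newton:", w_newton, "SGD:", w_sgd)
```

To explore the hyper-parameters, vary `step`, the starting vector, and `batch_size`. Here `gradient_descent` uses the summed gradient, so it diverges once `step` exceeds about 2 / λ_max(H) ≈ 0.024 for this data (for example with `step=0.05`), while `sgd` averages over each mini-batch, which keeps its usable step size roughly independent of `batch_size`.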